Legal AI: High on Artificial, Low on Intelligence

This article was originally published in November 2017.

Read the updated article: Steak, muffins and chihuahuas: The unkept promise of Legal A.I.

‘People overestimate the impact of AI in the short term, and underestimate the impact of AI in the long term’. – Richard Susskind

Everybody’s talking about it. Every week there is another law firm announcement of an ‘A.I. breakthrough’. And no one wants to be left behind.

Yet the truth, for the most part, is that these claims regarding artificial intelligence in the legal industry are ‘all tip, no iceberg’. For all the hype, no one can find a lawyer who can point to tangible ROI from legal A.I.

As one veteran legal technology CEO said to us recently, ‘law firms seem to be using A.I. to generate press releases, not legal work’. Another ‘A.I. vendor’ we know openly admits they target the marketing budget, not the IT budget, within law firms.

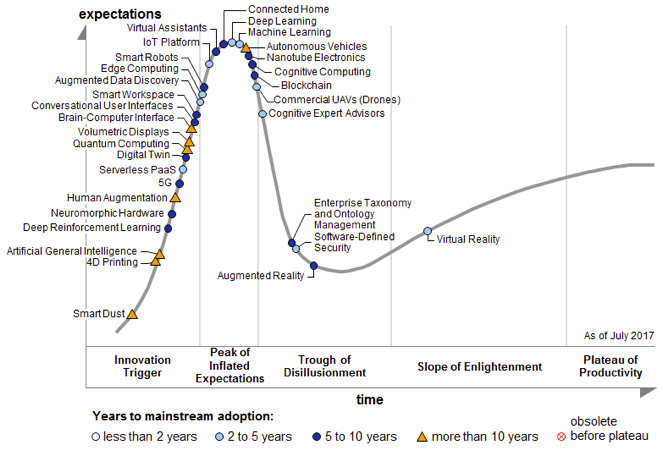

The legal industry is now at the stage of research firm Gartner’s Hype Cycle known as the ‘Peak of Inflated Expectations’. If history is any predictor of the future, we will shortly enter an A.I. Winter.

We shouldn’t be surprised. Experts suggest this will be the sixth A.I. Hype Cycle since the field was founded in 1956.

Yet, this Hype Cycle is different to all others in one key way:

Artificial Intelligence is working very effectively in many domains. Indeed, we use it every day.

So why is A.I. in the legal industry different?

There is a simple and a complex answer to this question.

The simple answer is that many vendors are misrepresenting their technology as A.I., making the relationship between artificial intelligence and law questionable in the eyes of consumers.

Take the example of ‘LISA’, which has been publicised as ‘The world’s first Artificially Intelligent Lawyer’. The truth is that it is neither A.I. nor the first attempt at an A.I. lawyer. In fact, it is just a ‘white-labelled’ contract automation product, not futuristic legal A.I. software. Perhaps most amusingly, using artificial intelligence in law firms to draft an NDA would be the technology equivalent of using a nuke to kill an ant.

As the CEO of Oracle said on CNBC recently, when companies claim they’re in A.I., ‘most of the time it’s just nonsense’.

Given the frequency of false claims, it is understandable that well-informed GCs are already entering Gartner’s ‘Trough of Disillusionment’. This is a shame. Legal A.I. software and tools will have a profoundly positive impact on the legal industry in the years to come. Investing in it will be critical to the advancement of the profession.

As this Fortune article suggests:

“The problem with AI as subject matter is that the companies behind it and journalists covering it (guilty here) fall into the trap of extolling the technology as the greatest (or scariest) ever. And then the inevitable reality is just, well, underwhelming.”

To understand why we aren’t there yet, you must understand the complex answer: what A.I. is and why, in most legal applications, it’s not effective…yet.

Understanding A.I.

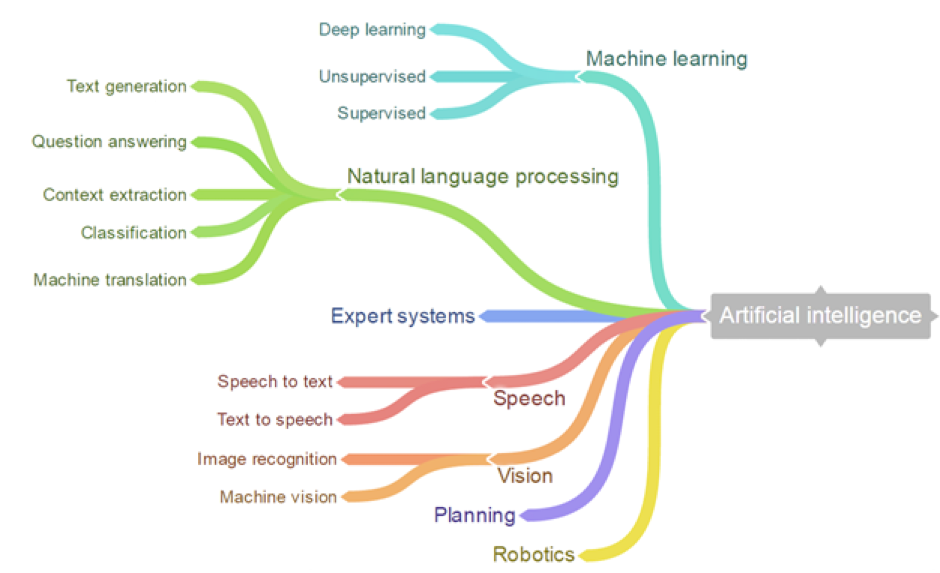

Much like human intelligence, there are many different types of Artificial Intelligence. Yet to simplify: to be A.I., a system must ‘learn’ and/or ‘recognise’, which is why ‘Machine Learning’ is the most prominent arm of A.I.

The challenge in the legal domain is that, to replace an in-house lawyer, A.I. needs to leverage not one but two branches of A.I.:

- ‘Natural Language Processing’ (i.e. reading)

- Machine Learning (i.e. reasoning).

However, unlike many other applications of A.I., to replace a lawyer the A.I. software needs to do this with a very, very low tolerance for error.

For example, if Facebook’s A.I. shows you an image of a cute cat and you are not interested in it, the implications are reasonably limited. On the other hand, a slight change in the wording of an agreement could cost millions. Hence, Facebook could get away with, say, 60% accuracy in predicting cute cats, while legal A.I. software needs to demonstrate near-perfect accuracy before it will be widely adopted.
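To put rough numbers on that gap, the sketch below assumes, purely for illustration, that a contract has 50 clauses and that a system reads each clause correctly independently of the others. Neither the clause count nor the independence assumption comes from real data; the point is only how quickly small per-clause error rates compound across a whole agreement.

```python
def chance_of_flawless_review(per_clause_accuracy: float, clauses: int) -> float:
    """Probability that every clause is read correctly, assuming independent errors."""
    return per_clause_accuracy ** clauses


# Hypothetical contract length, chosen only for illustration.
CLAUSES = 50

for accuracy in (0.60, 0.95, 0.99, 0.999):
    p = chance_of_flawless_review(accuracy, CLAUSES)
    print(f"{accuracy:.1%} per clause -> {p:.1%} chance of an error-free contract")
```

Under these assumptions, even 99% accuracy per clause leaves roughly a 40% chance that at least one clause in the contract is misread.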

But this is only part of the problem. For A.I. to ‘learn’, the engine needs enormous amounts of reasonably well-structured data. The smaller, more heterogeneous, or less structured the dataset, the less effective the legal A.I. engine will be.

Not only is there huge variance in contracts, but the dataset is also comparatively small and highly fragmented. And for the computer to ‘read’ the agreements and intuit meaning, it requires the second A.I. approach: Natural Language Processing (‘NLP’).

This is not trivial. In NLP, you are, in a sense, trying to teach a computer a second language. As anyone who has taught English to someone else can tell you, language is not exactly rational and we all express the same thing differently.

Still doubt me? Consider IBM’s much-hyped Watson platform. After billions of dollars of investment, it has yet to demonstrate any true breakthroughs for lawyers. Not even IBM’s legal function has found a use for it.

So, A.I. doesn’t work in the legal industry?

No. It’s not that simple. There is one great example of A.I. making headway in the legal industry. ‘Predictive Coding’ is saving clients tens of millions of dollars a year in litigation, and sparing lawyers millions of hours of soul-destroying discovery.

At Plexus, we use a version of this technology to power search within our platform. However, unlike less scrupulous players, we feel marketing it as an ‘A.I. platform’ would be misleading to our clients.

So why does A.I. work in legal search and not everywhere?

As you might guess, it’s a lot easier for A.I. to find the words ‘smoking’ and ‘gun’, or ‘change’ and ‘control’, in vast numbers of contracts than it is to understand how a clause is affected by special provisions and definitions, and how this aligns with an organisation’s risk tolerance.
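A minimal sketch of why the first task is the easy one: checking whether two words appear in the same document takes a few lines of code and no understanding of the text. This is a plain keyword match, not predictive coding and not how any particular product works; the function name and the sample documents are made up for illustration.

```python
import re
from typing import Iterable


def contains_all_terms(text: str, terms: Iterable[str]) -> bool:
    """True if every term appears in the text as a whole word (case-insensitive)."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    return all(term.lower() in words for term in terms)


# Made-up sample documents, for illustration only.
documents = {
    "agreement_a": "Either party may terminate upon a Change of Control of the other party.",
    "agreement_b": "The supplier shall control quality at each site.",
}

hits = [name for name, text in documents.items()
        if contains_all_terms(text, ["change", "control"])]
print(hits)
```

The match says nothing about what the clause actually does. Whether a change of control triggers termination, requires consent, or has no effect at all still has to be read and interpreted against the rest of the agreement, which is the hard part.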

What can you learn from all of this?

In the short term, clients should expect to be disappointed by promises of A.I. In the long term, they should expect to be astounded. The combination of vast quantities of capital, research and computing power being directed at the development of A.I. means the field is advancing incredibly quickly.

However, given the challenges above, it would be a fair bet that we will see driverless cars on our roads before we see law and artificial intelligence going hand in hand, with A.I. genuinely powering legal functions.

In the interim, GCs’ time and money would be far better spent on proven solutions like legal automation, workflow tools, and contract lifecycle management than on waiting for legal A.I. software to deliver a silver bullet.
