58.7% of legal teams are adopting AI. Here are the 6 specific barriers stopping them from making it work

Fifty-eight point seven percent of in-house legal teams are actively adopting AI. That number sounds like progress. It is, until you look at what sits alongside it.

Andrew Mellett

May 12, 2026

Only 6.7% of those same teams have fully operationalised an AI-enabled operating model.

That gap, between teams that are doing something with AI and teams that have actually made it work, is where most legal transformation efforts are quietly stalling. The technology is not the problem. Thomson Reuters’ global survey of 2,275 legal professionals found that only 22% of organisations even have a defined AI strategy. For the rest, enthusiasm is standing in for a plan.

Understanding why operationalisation is so hard, and what the teams who break through actually do differently, is the most practical conversation in legal right now. Based on data from the Plexus Future-Ready General Counsel 2026 report, which surveyed 150 General Counsels across four regions, six barriers come up again and again.

Here is what each one is, why it stalls implementation, and exactly what high performing teams do to get past it.

Barrier 1: Data quality and readiness

Cited by 54% of GCs as a primary barrier to AI adoption

What it is

AI systems do not create structure from chaos. They require clean, consistent, accessible data to perform reliably. For most in-house legal teams, that data simply does not exist in a usable form. Contracts live across shared drives, email threads, personal folders, and legacy systems. Naming conventions are inconsistent. Metadata is missing. Version control is non-existent.

Why it stalls teams

When teams begin AI implementation, particularly contract review or knowledge management, they quickly discover that the AI is only as good as what it can find and read. If documents are scattered and unstructured, outputs become unreliable. Teams lose confidence, pilots stall, and the perception sets in that AI does not work for legal. The real problem is not the AI. It is the data infrastructure it was given to work with.

What high performing teams do

They treat data remediation as the first phase of AI implementation, not an afterthought. Specifically:

• Conduct a data audit before selecting a platform. Map where every category of legal document currently lives, who owns it, and what format it is in.

• Centralise before automating. Move documents into a single system with consistent folder structures, document types, and metadata before asking AI to do anything with them.

• Start narrow. Instead of attempting to organise all legal data at once, high performing teams pick one document type (NDAs are the most common starting point) and build clean data infrastructure around that category first.
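As a rough illustration of the audit step, the sketch below summarises where one document type lives, in what format, and which files have no recorded owner. The `DocumentRecord` fields, the `audit_summary` helper, and the sample inventory are all hypothetical; a real audit would pull this information from whatever systems the team actually uses.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class DocumentRecord:
    """One row of a hypothetical audit inventory."""
    name: str
    doc_type: str          # e.g. "NDA", "MSA"
    location: str          # e.g. "shared_drive", "email", "legacy_system"
    owner: Optional[str]   # None when nobody is recorded as the owner
    file_format: str       # e.g. "pdf", "docx"


def audit_summary(records, doc_type):
    """Summarise where one document type lives and what metadata is missing."""
    subset = [r for r in records if r.doc_type == doc_type]
    return {
        "total": len(subset),
        "by_location": dict(Counter(r.location for r in subset)),
        "by_format": dict(Counter(r.file_format for r in subset)),
        "missing_owner": [r.name for r in subset if r.owner is None],
    }


# Illustrative data only; names and locations are made up.
inventory = [
    DocumentRecord("acme_nda_v2.docx", "NDA", "shared_drive", "j.smith", "docx"),
    DocumentRecord("NDA final FINAL.pdf", "NDA", "email", None, "pdf"),
    DocumentRecord("supplier_msa.pdf", "MSA", "legacy_system", "a.lee", "pdf"),
    DocumentRecord("nda_beta_co.pdf", "NDA", "shared_drive", None, "pdf"),
]

# Start narrow: audit a single document type first.
nda_report = audit_summary(inventory, "NDA")
print(nda_report)
```

Running the summary for one category at a time mirrors the "start narrow" approach: the list of files without an owner becomes the first remediation backlog.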

Platforms like Plexus that centralise legal work across contracts, matters, and approvals in one system create the data foundation that makes AI reliable from day one, rather than requiring years of retrospective clean up.

Barrier 2: AI accuracy and reliability concerns

Cited by 52% of GCs as a primary barrier

What it is

Lawyers are trained to distrust unverified output. AI hallucinations, where a model generates plausible-sounding but factually incorrect information, are a genuine risk in a profession where errors carry legal consequences. Many legal teams have had negative early experiences with consumer AI tools that produced confident but wrong answers, creating lasting scepticism.

Why it stalls teams

Accuracy concerns tend to manifest as blanket opposition to AI rather than targeted risk management. Instead of identifying which use cases carry low risk and trialling those, teams apply the highest-risk scenario (AI giving incorrect legal advice) to every possible use case, including low-risk ones such as AI summarising a standard NDA. The result is paralysis. Nothing gets implemented because everything feels risky.

What high performing teams do

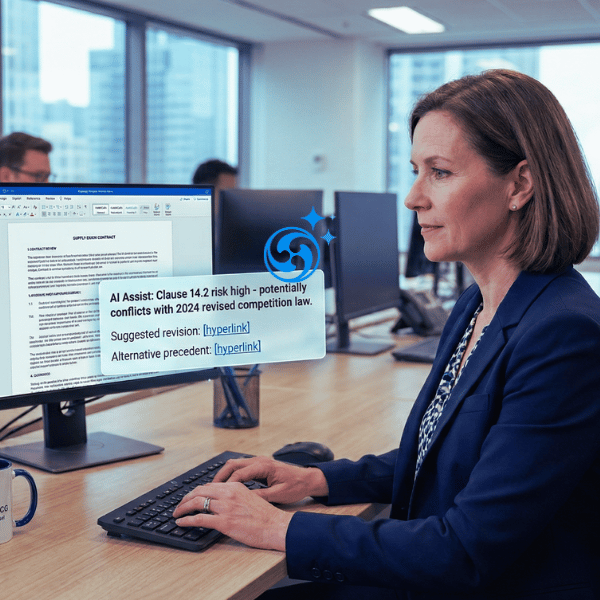

They sequence AI adoption by risk level, not by ambition. The data from 150 GCs is clear: 94% of active AI users start with contract review and drafting. Not because it is the most exciting use case, but because it is the lowest risk one. A lawyer reviews the AI output before it is used. The AI speeds up the work; it does not replace the judgement.

• Define what “accuracy” means for each use case before implementation. For document summarisation, 90% accuracy may be acceptable. For external legal advice, it is not.

• Build human review into the workflow explicitly, not as a sign of AI failure, but as the designed standard for the first phase.

• Track accuracy metrics over time. Teams that measure and document AI performance build confidence through evidence, not assumption.
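One way to make "track accuracy over time" concrete is to log whether the reviewing lawyer accepted each AI output and compare the acceptance rate per period against the target set for that use case. The sketch below is illustrative only: `ReviewOutcome`, `ACCURACY_TARGETS`, and the sample data are assumptions, and "accepted without material correction" is just one possible definition of accuracy.

```python
from dataclasses import dataclass

# Hypothetical per-use-case targets. The 0.90 figure echoes the summarisation
# example above; every other use case would need its own agreed target.
ACCURACY_TARGETS = {"document_summarisation": 0.90}


@dataclass
class ReviewOutcome:
    use_case: str
    period: str      # e.g. "2026-01"
    accepted: bool   # True if the lawyer accepted the output without material correction


def accuracy_by_period(outcomes, use_case):
    """Return {period: share of accepted outputs} for one use case."""
    totals = {}
    for o in outcomes:
        if o.use_case != use_case:
            continue
        accepted, total = totals.get(o.period, (0, 0))
        totals[o.period] = (accepted + int(o.accepted), total + 1)
    return {p: a / t for p, (a, t) in sorted(totals.items())}


def periods_below_target(outcomes, use_case):
    """List the periods where measured accuracy fell short of the target."""
    target = ACCURACY_TARGETS[use_case]
    rates = accuracy_by_period(outcomes, use_case)
    return [p for p, rate in rates.items() if rate < target]


# Made-up review log: 8 of 10 accepted in January, 19 of 20 in February.
outcomes = (
    [ReviewOutcome("document_summarisation", "2026-01", i < 8) for i in range(10)]
    + [ReviewOutcome("document_summarisation", "2026-02", i < 19) for i in range(20)]
)

rates = accuracy_by_period(outcomes, "document_summarisation")
flagged = periods_below_target(outcomes, "document_summarisation")
print(rates, flagged)
```

Because the log is built from the human review step that is already in the workflow, the evidence accumulates as a by-product of normal work rather than as a separate exercise.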

Barrier 3: Security and data confidentiality

Cited by 40% of GCs as a primary barrier

What it is

Legal documents contain some of the most sensitive information in any organisation: M&A terms, litigation strategy, personal employee data, commercially sensitive contracts. Uploading that data to a cloud AI system raises legitimate questions about where it is stored, who can access it, whether it is used for model training, and what happens in a breach scenario.

Why it stalls teams

Security concerns often arrive as a procurement or IT veto rather than a solvable problem. By the time the GC is ready to move, a data security review flags risks, procurement adds criteria, and the implementation timeline extends indefinitely. Meanwhile, the business units that generated the need for legal AI in the first place have moved on.

What high performing teams do

• Establish security requirements as a starting brief. Before evaluating any platform, document the organisation’s non-negotiable data requirements: data residency, encryption standards, SOC 2 compliance, no training data use clauses.

• Involve IT and InfoSec early. Teams that bring IT into the conversation in the first month of exploration avoid the late-stage veto. Those that treat IT as a final sign-off hurdle consistently hit it.

• Select platforms built for legal-grade confidentiality. Enterprise legal platforms purpose-built for in-house use, with dedicated tenancies, audit trails, and explicit data isolation, address security concerns at the architecture level rather than through contract workarounds.

Barrier 4: System integration complexity

Cited by 36% of GCs as a primary barrier

What it is

Most legal teams already use a collection of tools: document management systems, eSignature platforms, matter management software, email. Introducing AI into that environment requires it to connect with existing systems, or the workflow breaks. Data lives in one place, AI lives in another, and lawyers end up doing manual transfers that eliminate the efficiency gain.

Why it stalls teams

Integration complexity is where many well-resourced implementations stall. A team selects a promising AI tool, begins implementation, and discovers that connecting it to existing systems requires custom API development, IT resourcing, and timelines measured in months. The original urgency dissipates. The project is deprioritised.

What high performing teams do

• Audit existing systems before vendor evaluation. Understand what APIs or integrations are already available and what custom development would be required.

• Prioritise platforms with prebuilt integrations for the tools the legal team already uses, particularly email, document storage, and eSignature.

• Accept a degree of workflow change. Some of the most effective implementations involve simplifying the tool stack rather than integrating everything. Moving from five systems to two is often faster and more effective than building bridges between all five.

Platforms built as unified legal operating systems, rather than point solutions layered onto existing infrastructure, eliminate most integration complexity by design.

Barrier 5: Budget and resourcing constraints

Cited by 32% of GCs as a primary barrier

What it is

Legal technology investment requires budget approval. For most GCs, that means a CFO conversation, and CFOs, who rank enterprise-wide cost optimisation among their top five priorities in 2026 (Gartner), are not receptive to technology spend framed as an innovation initiative. Legal departments also typically lack dedicated legal operations or technology implementation resource, meaning someone already doing a full-time job has to absorb the implementation work.

Why it stalls teams

The GC is sold on the value. The CFO is not. And because most GCs have not built the habit of quantifying their function’s output (legal is historically the only function with no operational dashboard), they struggle to frame the investment in terms that land with finance. The conversation becomes “we want to buy technology” rather than “here is the business problem this solves and here is what it costs us not to solve it.”

What high performing teams do

• Reframe the investment as budget reallocation, not new spend. The most successful business cases for legal AI are built around reducing external law firm spend and redirecting that budget. If the platform recovers even a fraction of what is currently going to outside counsel, where only 20% of matters stay within budget (Gartner, 2025), the ROI calculation changes entirely.

• Quantify the cost of the status quo. How many hours does contract execution consume each week? What is the loaded cost of that time? What is the risk exposure from manual compliance tracking? The business case is easier to make when the cost of not acting is clearly priced.

• Start with a scoped pilot, not a full deployment. A focused pilot on one use case, such as contract review for a specific contract type, with defined success metrics, is easier to fund and harder to argue against than an enterprise-wide transformation initiative.
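The "cost of the status quo" arithmetic can be sketched in a few lines. Every figure below is a placeholder rather than survey data, and the function names, the 48 working weeks, and the recoverable share are assumptions chosen only to show the shape of the calculation.

```python
def annual_manual_cost(hours_per_week, loaded_hourly_cost, working_weeks=48):
    """Loaded cost of the lawyer time a manual process consumes each year."""
    return hours_per_week * loaded_hourly_cost * working_weeks


def net_annual_benefit(manual_cost, recoverable_percent, platform_cost):
    """Recovered cost minus platform cost; a positive result favours the investment."""
    recovered = manual_cost * recoverable_percent / 100
    return recovered - platform_cost


# Placeholder inputs: 30 hours a week on contract execution at a loaded
# cost of 150 per hour, 40% of that time assumed recoverable, and a
# platform costing 60,000 a year.
manual_cost = annual_manual_cost(hours_per_week=30, loaded_hourly_cost=150)
net_benefit = net_annual_benefit(manual_cost, recoverable_percent=40, platform_cost=60_000)

print(f"Annual cost of the status quo: {manual_cost:,.0f}")
print(f"Net annual benefit after platform cost: {net_benefit:,.0f}")
```

The same structure extends to external counsel fees or risk exposure by adding further cost lines. The point is that each input is a number finance can challenge and verify, which is what moves the conversation away from an innovation narrative.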

Barrier 6: Change resistance from legal teams

Cited by 28% of GCs as a primary barrier

What it is

Lawyers are trained to be sceptical. That is professionally useful. It is also, in the context of AI adoption, a significant implementation risk. Scepticism about AI capabilities, concern about job displacement, and a preference for established ways of working all contribute to resistance, sometimes passive and sometimes explicit, that can undermine implementation regardless of how good the technology is.

Why it stalls teams

Change resistance rarely surfaces as a clear objection. It appears as low engagement with training sessions, reluctance to use the new system for actual work, workarounds that route around the platform, and eventually, a return to old habits. The platform gets paid for. Nobody uses it. The GC reports low adoption to the CFO and the initiative is quietly shelved.

What high performing teams do

• Involve the team in selection, not just implementation. Lawyers who participated in evaluating the platform are significantly more likely to adopt it than those who had it handed to them.

• Frame AI as capacity recovery, not replacement. The 44% of legal work that is technically automatable today (contracts, compliance queries, routine governance) is the work most lawyers find least engaging. Automating it gives lawyers more time for the substantive work they became lawyers to do. That framing changes the conversation.

• Prioritise adoption support alongside technology. The platforms with the highest adoption rates are those that include structured onboarding, role-specific training, and ongoing support as part of the implementation model, not as optional add-ons.

The pattern that separates the 6.7% from everyone else

The teams that have fully operationalised AI, the 6.7% in the Plexus survey, did not overcome these barriers by being better resourced or more technically sophisticated. They broke through by treating implementation as a change management challenge, not a technology challenge.

They cleaned their data before they selected their platform. They involved IT before they needed a security sign-off. They built the CFO business case around cost reallocation, not technology spend. They involved their lawyers in the selection process. And they started with contract review, the use case with the clearest ROI and the lowest risk, before expanding.

The barriers are real. None of them are insurmountable. And the gap between 58.7% adopting and 6.7% operationalised is, in practice, the distance between a plan and an intention.

Source: Plexus Future-Ready General Counsel 2026 Survey, n=150 General Counsels, January 2026. External citations: Thomson Reuters Generative AI in Professional Services Report 2025; ACC/Everlaw GenAI Survey 2025, n=657; Gartner Legal and Compliance Leader research 2025.

Questions? We have answers.

Data quality and readiness is cited most frequently, by 54% of GCs, as the primary barrier to AI adoption. Legal data is typically scattered across multiple systems with inconsistent structure, making it difficult for AI tools to perform reliably without prior data centralisation and clean up.

Most legal teams are 12 to 24 months from full AI operational maturity. Teams that start with a scoped pilot, typically contract review, and invest in data infrastructure and change management in parallel progress significantly faster than those attempting broad deployment from day one.

AI pilots most commonly fail to scale due to poor data infrastructure, inadequate change management, and the absence of a defined operating model. Technology is rarely the limiting factor. Teams that treat piloting as the end goal, rather than the first step in a documented implementation roadmap, consistently fail to convert early wins into lasting operational change.

Contract review and drafting is the recommended starting point, cited by 94% of active AI users in the Plexus survey. It has the clearest ROI, lowest implementation risk, and highest success rate of any legal AI use case, making it the most effective proof of concept for building internal confidence and expanding from there.

The most effective legal AI business cases frame the investment as a reallocation of existing external legal spend rather than new technology cost. Quantifying the cost of current manual processes (in lawyer hours, external counsel fees, and risk exposure) and comparing it against platform cost creates a CFO-ready argument that is significantly more persuasive than an innovation narrative.

Andrew Mellett

Andrew Mellett is the Founder and CEO of Plexus, a global leader in AI-powered legal technology. Recognised by the Financial Times and Harvard Business Review for his pioneering work in legal innovation, Andrew leads Plexus’s mission to train digital lawyers, helping the world’s top companies streamline legal operations and scale expertise with artificial intelligence.

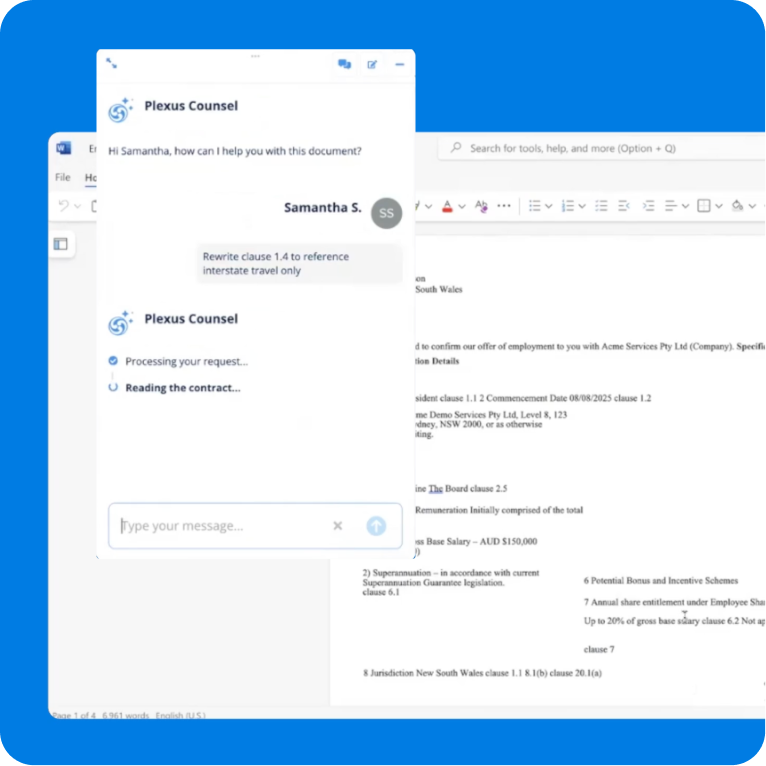

All your legal work in one AI-powered platform

Faster reviews, self-service for business teams, and smarter compliance in every workflow.

Related resources

Why In-House Legal Teams Are Moving Beyond Single-Contract Review

Cadell Falconer

As Head of Product at Plexus, Cadell Falconer brin...

Don't miss out on Perspectives by Plexus each month

Legal news, innovation and insights delivered straight to your inbox.