How general counsels can claim AI governance, and why the 8.7% who have done it are having a different board conversation

In most organisations, AI governance belongs to nobody in particular. IT owns the infrastructure. Finance owns the risk appetite. The board wants someone accountable. And the GC, who has the clearest mandate for enterprise risk and regulatory compliance, is not in the room where those decisions are made.

Andrew Mellett

May 13, 2026


The data from the Plexus Future-Ready General Counsel 2026 survey of 150 GCs makes this visible. Only 8.7% of General Counsels currently own AI governance in their organisation. IT and CIO functions own it in 28.7% of organisations. AI governance committees own it in 14.7%. The legal function, despite having unique responsibility for enterprise risk, compliance, and responsible AI deployment, is absent from the governance seat in nine out of ten organisations.

This is not a permanent condition. It is a vacancy. And vacancies in governance, unlike vacancies in headcount, get filled quickly once the right person moves.

This article is for the GC who sees AI governance as a strategic lever and wants to understand what claiming it actually requires. The full research is available in the Plexus Future-Ready General Counsel 2026 report.

| Figure | Finding |
|---|---|
| 8.7% | of GCs own AI governance |
| 28.7% | IT and CIO functions own it |
| 14.7% | AI governance committees own it |
| 48% | of GCs cite AI literacy as the defining competency of a future-ready leader |

Source: Plexus Future-Ready General Counsel 2026 Survey, n=150, January 2026

What AI governance ownership actually means for a GC

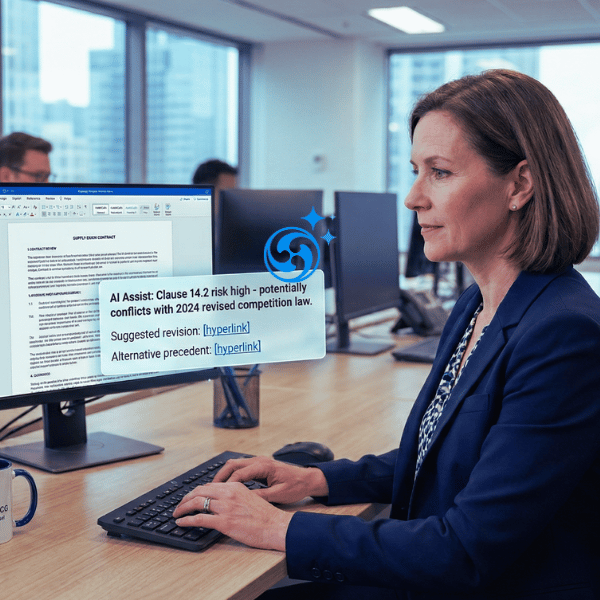

AI governance is not a compliance checklist. It is the organisational function that decides how AI is used, who is accountable when it goes wrong, and what the enterprise's exposure to AI-related risk looks like at any given moment.

For a GC, owning AI governance means three things in practice:

Policy authority

The GC sets the rules for how AI is deployed across the organisation. This includes what systems are approved, what data can be used to train or query them, how AI-generated outputs must be reviewed before use, and what the escalation path is when a system behaves unexpectedly. Without policy authority, governance is just opinion.

Accountability

When an AI system produces a discriminatory decision, a privacy breach, or a regulatory violation, someone needs to answer for it. In organisations where the GC owns governance, that accountability is clear and located in the function best equipped to manage it. In organisations where governance is diffuse or IT-led, accountability tends to be contested, which is its own category of risk.

Visibility

AI governance requires a live picture of what AI systems are operating across the organisation, what they are doing, and whether they are operating within sanctioned parameters. This is not a one-time audit. It is an ongoing operational capability. GCs who own governance have the infrastructure to produce that picture. Those who do not are making claims they cannot evidence.
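The article does not say how that picture is produced. As a minimal sketch, assuming the legal team can obtain a list of AI tools observed in use (from procurement records, single sign-on logs or a staff survey, for example), the check below compares each observed tool against an approved-systems register and flags use outside sanctioned parameters. The record fields, tool names and data categories are hypothetical placeholders, not any platform's schema.

```python
from dataclasses import dataclass


# Hypothetical record of one AI tool observed in use. The fields are
# illustrative assumptions, not a schema from any particular platform.
@dataclass(frozen=True)
class ObservedTool:
    name: str
    business_unit: str
    data_categories: frozenset[str]
    outputs_reviewed: bool


# Hypothetical approved-systems register: each approved tool maps to the
# data categories it is sanctioned to process.
APPROVED_REGISTER: dict[str, frozenset[str]] = {
    "contract-drafting-assistant": frozenset({"contract_text"}),
    "support-chatbot": frozenset({"customer_queries"}),
}


def visibility_exceptions(observed: list[ObservedTool]) -> list[str]:
    """List every observed use that falls outside sanctioned parameters."""
    issues: list[str] = []
    for tool in observed:
        allowed = APPROVED_REGISTER.get(tool.name)
        if allowed is None:
            # Not on the register at all: flag it and skip the other checks.
            issues.append(f"{tool.name} ({tool.business_unit}): not on approved register")
            continue
        # Data the tool processes beyond what the register sanctions.
        unsanctioned = tool.data_categories - allowed
        if unsanctioned:
            issues.append(f"{tool.name} ({tool.business_unit}): unsanctioned data {sorted(unsanctioned)}")
        if not tool.outputs_reviewed:
            issues.append(f"{tool.name} ({tool.business_unit}): outputs not reviewed")
    return issues


if __name__ == "__main__":
    observed = [
        ObservedTool("support-chatbot", "Customer Service",
                     frozenset({"customer_queries", "payment_data"}), True),
        ObservedTool("image-generator", "Marketing", frozenset({"brand_assets"}), False),
    ]
    for issue in visibility_exceptions(observed):
        print(issue)
```

Reporting exceptions rather than the full inventory is a deliberate choice in this sketch: it surfaces first what the oversight function most needs to act on, while the register itself remains the complete record.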

Why most GCs cede governance to IT by default

The 8.7% figure is striking not because it reflects opposition to GC-led governance, but because it reflects the absence of any attempt to establish it. Most GCs have not lost this ground. They never claimed it.

Three dynamics produce this outcome:

AI governance arrives as a technology question

When AI enters an organisation, the initial questions are procurement questions: which system, which vendor, which integration, which cost. These land naturally with IT and with finance. By the time the legal and regulatory implications become visible, a governance structure has already formed around the technology function, and repositioning it requires more effort than establishing it would have.

Legal lacks the operational infrastructure to credibly own it

Claiming governance over AI use across an enterprise requires systems. You need to know what AI tools are in use, where they are being used, what data they are processing, and whether the outputs are being reviewed appropriately. Many legal functions do not have this infrastructure, so even where the GC has governance authority in principle, they cannot exercise it in practice.

This is the most solvable part of the problem. Compliance tracking and contract audit infrastructure, combined with a centralised matter and policy management system, gives the legal function the operational backbone to make governance claims credible.

The GC has not made the case internally

AI governance ownership does not arrive by appointment. It requires the GC to articulate why the legal function is better positioned than IT to own enterprise AI risk, and to do so before a governance crisis makes the question urgent. GCs who have claimed governance did so proactively, in the window before a major incident created a reactive reshuffle of accountability.

What a legal AI governance structure looks like in practice

The governance structures that GCs are building share four common components. None of them require a dedicated legal operations team to implement. They require clarity, systems, and visible accountability.

| Component | What it includes | Why it matters for governance credibility |
|---|---|---|
| AI use policy | Approved systems register, data use rules, output review requirements, prohibited use cases, escalation procedures | Converts governance from aspiration to enforceable standard. Without a policy, the GC has no authority. |
| Risk classification | Tiered risk framework for AI use cases: low risk (approved on policy, no review), medium risk (legal sign-off required), high risk (board escalation), prohibited | Allows the business to move quickly on low risk AI use while routing consequential decisions through legal. Prevents the governance function from becoming a bottleneck. |
| Oversight mechanism | Regular review cadence, AI use register, incident reporting process, cross-functional accountability (legal chairs, IT and product are members) | Makes governance a live function rather than a document. Demonstrates to the board that AI risk is being actively monitored, not just acknowledged. |
| Audit and reporting | Automated compliance tracking, contract and matter audit trails, board-level reporting dashboard, incident log | Converts governance from a policy exercise into an evidence-based capability. This is what makes the GC's board conversation credible rather than aspirational. |
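The risk classification component can be read as a small decision procedure: check the most severe tier first, and let anything that triggers no criteria pass on policy alone. The sketch below assumes hypothetical yes/no attributes for each proposed use case; the attributes, and the tier each one triggers, are placeholders an organisation would replace with its own policy criteria, not a standard drawn from the survey or from any regulation.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # The four tiers described in the table; each value records the consequence.
    LOW = "approved on policy, no review"
    MEDIUM = "legal sign-off required"
    HIGH = "board escalation"
    PROHIBITED = "prohibited"


# Hypothetical attributes of a proposed AI use case. Which attribute pushes a
# use case into which tier is an illustrative assumption; each organisation
# would set its own criteria in its AI use policy.
@dataclass(frozen=True)
class UseCase:
    name: str
    prohibited_purpose: bool = False
    automated_decisions_about_people: bool = False
    processes_personal_data: bool = False
    customer_facing: bool = False


def classify(use_case: UseCase) -> RiskTier:
    """Return the most severe tier whose criteria the use case meets."""
    if use_case.prohibited_purpose:
        return RiskTier.PROHIBITED
    if use_case.automated_decisions_about_people:
        return RiskTier.HIGH
    if use_case.processes_personal_data or use_case.customer_facing:
        return RiskTier.MEDIUM
    # Nothing consequential flagged: approved on policy, no legal review.
    return RiskTier.LOW


if __name__ == "__main__":
    for case in [
        UseCase("summarise internal meeting notes"),
        UseCase("draft replies to customer emails", customer_facing=True),
        UseCase("screen job applicants", automated_decisions_about_people=True,
                processes_personal_data=True),
    ]:
        tier = classify(case)
        print(f"{case.name}: {tier.name} ({tier.value})")
```

Ordering the checks from most to least severe means a use case that meets several criteria is always routed to the strictest applicable tier, which keeps the low-risk path fast without letting consequential uses slip through it.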

The audit and reporting component is where most governance frameworks stall. Policy is easy to write. Oversight requires infrastructure. Specifically, it requires a system that centralises contract and matter data, tracks approvals and reviews, and produces a reportable record of how the legal function is managing AI risk across the organisation.

This is precisely what a legal operating system does. The GC who owns governance and operates on a platform that makes compliance visible is in a fundamentally different position from the GC who owns governance in principle but cannot produce evidence of it when the board asks.

The board conversation that governance ownership unlocks

The most significant consequence of AI governance ownership is not internal. It is the conversation it creates with the board.

Boards are asking harder questions about AI than they were two years ago. Geopolitical volatility, regulatory developments including the EU AI Act and emerging Australian AI frameworks, and high-profile AI failures in adjacent industries have moved AI risk from a future concern to a current agenda item.

The GC who does not own governance shows up to these conversations defensively. They can speak to legal risk in the abstract but cannot demonstrate that the organisation's AI use is being actively monitored or that there is a clear accountability chain when something goes wrong.

The GC who owns governance shows up differently. They can answer:

• Which AI systems are currently operating across the business and what they are doing

• What the risk classification of each system is and what oversight applies

• What the incident record looks like and how issues have been resolved

• What the regulatory exposure is under current and emerging AI legislation

• What the organisation's AI governance policy says and how it is being enforced
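The first three questions in that list are, in data terms, queries over an AI use register. A minimal sketch, assuming a hypothetical register in which each system records its purpose, risk tier, applicable oversight and incident history, shows how those answers could be produced on demand rather than assembled before each board meeting. The field names and example systems are illustrative only; regulatory exposure and policy enforcement would need inputs this sketch does not model.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Incident:
    description: str
    resolved: bool


# Hypothetical register entry; fields are illustrative assumptions.
@dataclass
class RegisteredSystem:
    name: str
    purpose: str
    risk_tier: str   # e.g. "low", "medium", "high"
    oversight: str   # e.g. "legal sign-off", "board escalation"
    incidents: list[Incident] = field(default_factory=list)


def board_summary(register: list[RegisteredSystem]) -> dict:
    """Answer the inventory, classification and incident questions from the register."""
    all_incidents = [i for s in register for i in s.incidents]
    return {
        "systems_in_operation": len(register),
        "systems_by_tier": dict(Counter(s.risk_tier for s in register)),
        "incidents_total": len(all_incidents),
        "incidents_open": sum(not i.resolved for i in all_incidents),
        # Unresolved incidents, named by system, for the board pack.
        "open_items": [f"{s.name}: {i.description}"
                       for s in register for i in s.incidents if not i.resolved],
    }


if __name__ == "__main__":
    register = [
        RegisteredSystem("contract-drafting-assistant", "first drafts of NDAs", "medium",
                         "legal sign-off",
                         [Incident("clause drafted from outdated template", resolved=True)]),
        RegisteredSystem("support-chatbot", "answers customer queries", "medium", "legal sign-off"),
        RegisteredSystem("credit-prescreen-model", "flags loan applications", "high",
                         "board escalation",
                         [Incident("unexplained approval-rate gap between regions", resolved=False)]),
    ]
    for key, value in board_summary(register).items():
        print(f"{key}: {value}")
```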

That is a board-level conversation about enterprise risk, not a legal department update. It is the conversation that positions the GC as a strategic asset rather than a cost centre.

In the Plexus 2026 report, 53.3% of GCs surveyed have AI governance frameworks that support responsible innovation. Those moving from framework to ownership are the ones being invited into strategic conversations they were previously excluded from.

How to make the internal case for GC-led AI governance

Claiming AI governance requires making the case that the legal function is better positioned than IT, finance, or a committee to own it. Here is how the GCs who have done it have approached that argument:

Lead with mandate, not with preference

The GC's claim to AI governance is not based on wanting it. It is based on the legal function's existing mandate for enterprise risk, regulatory compliance, and responsible deployment of technology that creates legal obligations. AI governance is an extension of that mandate, not a new one. Frame it that way in every internal conversation.

Identify the current governance gap explicitly

Most boards and CEOs do not know who owns AI governance in their organisation. When you surface that gap, the response is rarely satisfaction with the status quo. Find the gap, name it, and propose a structure that closes it with the legal function at the centre.

Partner with IT, do not compete with it

The most durable AI governance structures position the GC as the accountability owner and IT as the technical implementer. Legal sets the policy and holds the accountability. IT manages the systems and provides the technical input. This is not a turf conflict. It is a division of expertise. GCs who frame it as a partnership encounter significantly less internal resistance than those who frame it as a leadership transfer.

Start with a narrow, high-visibility claim

Do not start by claiming governance over all AI use across the enterprise. Start with a category where the legal stakes are clearest: AI used in contract generation, AI used in compliance monitoring, AI used in customer-facing communications. Build the governance structure for that category, demonstrate it to the board, and expand from there.

Build the infrastructure before you make the claim

Governance ownership is only credible when you can evidence it. Before making the claim internally, put the infrastructure in place: a centralised policy, a risk classification framework, an audit trail for AI-related decisions and approvals. A legal operating platform that tracks compliance automatically gives you that evidential base without requiring a dedicated team to build it from scratch.
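One common way to make an audit trail evidential is to make it tamper-evident: each entry stores a cryptographic hash of the previous entry, so altering any earlier record breaks the chain. The sketch below assumes that approach; it is not a description of how Plexus or any other platform stores audit data, the actor and subject strings are hypothetical, and a production log would also record timestamps and restrict who can write to it.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditTrail:
    """Append-only log of AI-related decisions and approvals. Each entry
    stores the hash of the entry before it, so editing an earlier record
    invalidates every hash that follows."""
    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _digest(body: dict) -> str:
        # sort_keys makes the serialisation, and therefore the hash, deterministic.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def record(self, actor: str, action: str, subject: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"seq": len(self.entries), "actor": actor, "action": action,
                "subject": subject, "prev_hash": prev_hash}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link back to its predecessor."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    trail = AuditTrail()
    trail.record("legal:reviewer-1", "approved", "support-chatbot (medium risk)")
    trail.record("legal:reviewer-1", "escalated to board", "credit-prescreen-model (high risk)")
    print(trail.verify())  # intact chain
    trail.entries[0]["actor"] = "someone-else"
    print(trail.verify())  # edited entry no longer matches its hash
```

A hash chain makes edits detectable rather than impossible: someone able to rewrite the entire log could recompute every hash, which is why such logs are usually paired with access controls or an externally held copy of the latest hash.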

The 8.7% who own AI governance today did not wait for a governance crisis to make this move. They identified the vacancy before it became a liability and moved into it while the cost of claiming it was still low.

That window does not stay open indefinitely. Regulatory frameworks are tightening. Board attention is intensifying. And the GCs who have already established governance ownership are becoming harder to displace with every passing quarter.

The full data on AI governance, adoption maturity, and the GC to CLO transition is in the Plexus Future-Ready General Counsel 2026 report.

Source: Plexus Future-Ready General Counsel 2026 Survey, n=150 General Counsels, January 2026. External citations: Thomson Reuters Generative AI in Professional Services Report 2025; ACC/Everlaw GenAI Survey 2025, n=657; Gartner Legal and Compliance Leader research 2025.

Questions? We have answers.

What does it mean for a GC to own AI governance?

AI governance ownership means the GC holds policy authority over how AI is used across the organisation, is accountable when AI systems produce adverse outcomes, and has the operational visibility to monitor AI use in real time. It is distinct from involvement in AI governance committees or providing legal input on AI decisions. Ownership means accountability, not just participation.

Why does AI governance usually sit with IT?

AI governance typically lands with IT because AI adoption enters organisations as a technology procurement question. By the time legal and regulatory implications become visible, a governance structure has already formed around the technology function. GCs have ceded governance by default rather than by decision, and most have not yet made the proactive case for repositioning.

What is the difference between an AI governance policy and a framework?

A policy sets the rules: what AI systems are approved, what data can be used, what review requirements apply, and what the escalation path is. A framework is the broader structure within which the policy operates, including risk classification, oversight mechanisms, incident reporting, and board reporting cadence. Most organisations have neither. GCs who own governance need both.

How should a GC make the internal case for owning AI governance?

The most effective approach is to lead with mandate rather than preference. The legal function already owns enterprise risk and regulatory compliance. AI governance is an extension of that mandate, not new territory. Surface the current governance gap explicitly, propose a structure that closes it, and position IT as a technical partner rather than a competitor for the accountability seat.

What infrastructure does a GC need to make governance ownership credible?

Governance ownership requires operational visibility: a live register of approved AI systems, automated compliance tracking, a contract and matter audit trail that evidences how AI-related decisions are being reviewed and approved, and a board-level reporting capability. Without this infrastructure, governance is a policy document rather than a function. Legal operating platforms that centralise compliance tracking and approval workflows provide this infrastructure without requiring a dedicated build.

Andrew Mellett

Andrew Mellett is the Founder and CEO of Plexus, a global leader in AI-powered legal technology. Recognised by the Financial Times and Harvard Business Review for his pioneering work in legal innovation, Andrew leads Plexus’s mission to train digital lawyers, helping the world’s top companies streamline legal operations and scale expertise with artificial intelligence.

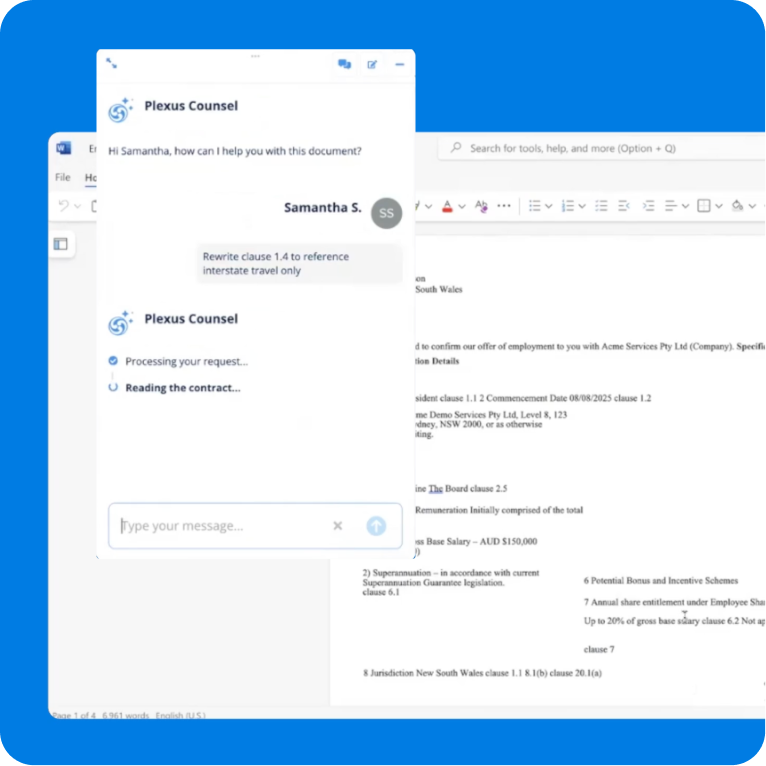

All your legal work in one AI-powered platform

Faster reviews, self-service for business teams, and smarter compliance in every workflow.

Related resources

Why In-House Legal Teams Are Moving Beyond Single-Contract Review

Cadell Falconer

As Head of Product at Plexus, Cadell Falconer brin...

Don't miss out on Perspectives by Plexus each month

Legal news, innovation and insights delivered straight to your inbox.